Solution Architecture

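Module Selection: Miniature PIR modules smaller than 20 mm × 20 mm for integration into embedded lighting (ceiling lights, corridor lamps). Note that the widely used HC-SR501 is a through-hole board of roughly 32 mm × 24 mm, so tight fixtures call for a smaller mini module.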

Linkage Logic: Connect the module to an MCU (e.g., ESP32) via GPIO to implement "person detected: full brightness; person still present: dimmed; person leaves: off after a 30 s delay" control. Add a light sensor (e.g., BH1750) so the light shuts off automatically in daylight.
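The linkage logic above can be sketched as a small state machine. This is a minimal illustration, not firmware for any specific board: the class name, the 10 s dim timer, and the 300 lux daylight cutoff are assumptions made for the example; only the 30 s off delay comes from the text. On real hardware, `motion` would come from the PIR output pin and `lux` from the light sensor.

```python
from dataclasses import dataclass, field
from enum import Enum


class LightState(Enum):
    OFF = "off"
    FULL = "full"
    DIMMED = "dimmed"


@dataclass
class LightController:
    off_delay_s: float = 30.0     # from the text: off 30 s after the person leaves
    dim_after_s: float = 10.0     # assumption: time at full brightness before dimming
    daylight_lux: float = 300.0   # assumption: ambient level treated as daytime
    state: LightState = LightState.OFF
    _on_since: float = field(default=0.0, init=False)
    _last_motion_at: float = field(default=float("-inf"), init=False)

    def update(self, now: float, motion: bool, lux: float) -> LightState:
        """Advance the controller; `now` is a timestamp in seconds."""
        # Daytime auto-shutdown overrides everything else.
        if lux >= self.daylight_lux:
            self.state = LightState.OFF
            return self.state

        if motion:
            self._last_motion_at = now
            if self.state is LightState.OFF:
                # First detection: switch to full brightness.
                self.state = LightState.FULL
                self._on_since = now

        if self.state is LightState.OFF:
            return self.state

        if now - self._last_motion_at >= self.off_delay_s:
            # No motion for the whole delay: the person has left.
            self.state = LightState.OFF
        elif (self.state is LightState.FULL
              and now - self._on_since >= self.dim_after_s):
            # Person is still around: drop to the dimmed level.
            self.state = LightState.DIMMED
        return self.state
```

Calling `update()` once per polling cycle keeps the timing logic in one place, so the off delay and the dim timer can be tuned without touching the sensor-reading code.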

Energy Savings: Reduces lighting energy consumption by 30%-50% compared to traditional switches, ideal for corridors and bathrooms.

Typical Scenarios

Smart Homes: Integrates with Alexa/Mijia systems, with sensing distance and delay adjustable from an app.

Commercial Lighting: Mall corridors use zoned sensing via RS485 bus for collaborative multi-module control.

III. Optimization of Sensing Solutions for Security Systems

Enhanced Intrusion Detection Design

Anti-Interference Technology: Dual-element complementary sensors suppress ambient temperature interference; pulse counting (2 consecutive pulses trigger alarm) reduces false positives.
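Pulse counting can be illustrated with a short sketch. The text specifies only that two consecutive pulses trigger the alarm; the 5 s window used here to decide whether pulses count as consecutive, and the class and method names, are assumptions for the example.

```python
from collections import deque


class PulseCounter:
    """Raise an alarm only after enough PIR pulses arrive close together."""

    def __init__(self, required_pulses: int = 2, window_s: float = 5.0):
        self.required_pulses = required_pulses  # from the text: 2 pulses
        self.window_s = window_s                # assumption: grouping window
        self._times = deque()

    def on_pulse(self, t: float) -> bool:
        """Record a pulse at time t (seconds); return True if the alarm fires."""
        self._times.append(t)
        # Forget pulses that are too old to count as consecutive.
        while self._times and t - self._times[0] > self.window_s:
            self._times.popleft()
        if len(self._times) >= self.required_pulses:
            self._times.clear()  # start fresh after an alarm
            return True
        return False
```

A single isolated pulse, such as one caused by a brief thermal disturbance, ages out of the window without raising the alarm.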

Multi-Dimensional Linkage: Combines with microwave radar modules (e.g., HB100) for "infrared + microwave" dual detection, triggering alarms only when both activate to avoid curtain sway or insect interference.
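The "infrared + microwave" rule amounts to an AND condition on the two sensors. Since the two outputs rarely switch at exactly the same instant, the sketch below treats them as coincident if they fire within a short interval; that 1 s interval and all names are assumptions for illustration.

```python
from typing import Optional


class DualDetector:
    """Confirm an intrusion only when PIR and microwave radar both trigger."""

    def __init__(self, coincidence_s: float = 1.0):
        self.coincidence_s = coincidence_s  # assumption: max gap between triggers
        self._last_pir: Optional[float] = None
        self._last_radar: Optional[float] = None

    def on_pir(self, t: float) -> bool:
        self._last_pir = t
        return self._confirmed()

    def on_radar(self, t: float) -> bool:
        self._last_radar = t
        return self._confirmed()

    def _confirmed(self) -> bool:
        # Both sensors must have fired, and close enough together in time.
        if self._last_pir is None or self._last_radar is None:
            return False
        return abs(self._last_pir - self._last_radar) <= self.coincidence_s
```

A swaying curtain may trip the radar but not the PIR, and a warm draft may do the opposite; in both cases only one timestamp updates and no alarm is raised.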

Concealed Installation: Modules that pair a pyroelectric element (e.g., RE200B) with an integrated lens and housing can be hidden in ceilings or decor for covert security.

Data Transmission and Response

Wireless Transmission: LoRa modules (e.g., EBYTE E32-433T30D) enable low-power long-range (3km open area) data upload, suitable for villa/factory perimeter security.

Rapid Response: Trigger delay under 0.5 s, with local buzzer alerts and cloud notifications (via NB-IoT) delivered within 3 s.

IV. Selection and Deployment Considerations

Environmental Adaptation

Avoid direct exposure to air vents/heating sources. Install at 2.2-2.8m (indoor) or 3-5m (outdoor) height, tilted 15°-30° to cover human activity zones.

Outdoor use requires modules rated IP65 or higher (e.g., AM312-IP67), fitted with sunshades to minimize solar interference.

Cost-Reliability Balance

Consumer scenarios: HC-SR501 (~$2/unit). Industrial use: prioritize stability (e.g., Panasonic EKMC1603111, temperature drift <±2%/°C).

Batch deployment: Reserve 10% redundant modules, with self-diagnostic voltage checks for fault detection.
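As a rough illustration of the batch-deployment advice, the sketch below computes the 10% spare reserve and classifies a measured supply voltage. The 4.5 V and 5.5 V limits are placeholder assumptions for a 5 V rail, not datasheet values, and both function names are hypothetical.

```python
def spares_needed(deployed: int, percent: int = 10) -> int:
    """Spare modules to reserve, rounded up (integer math avoids float error)."""
    return -(-deployed * percent // 100)


def check_supply(voltage: float, v_min: float = 4.5, v_max: float = 5.5) -> str:
    """Classify a measured supply voltage; the limits are assumed, not specified."""
    if voltage < v_min:
        return "undervoltage"
    if voltage > v_max:
        return "overvoltage"
    return "ok"
```

For example, `spares_needed(120)` gives 12 and `spares_needed(25)` rounds up to 3. A module that reports anything other than `"ok"` would be a candidate for replacement from the reserve.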

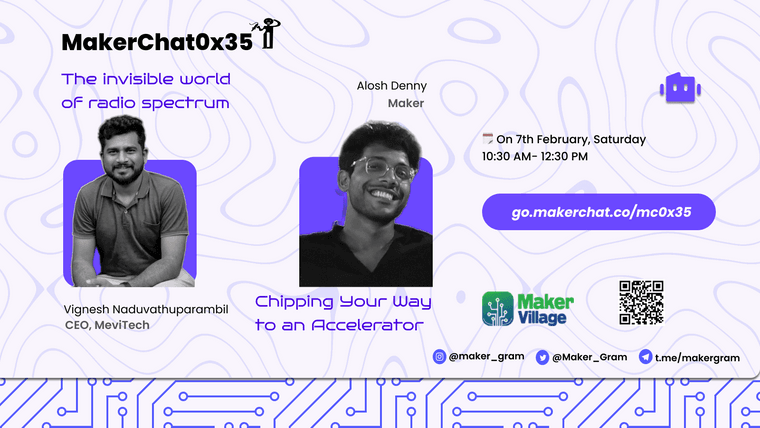
